27 Milestones in the History of Quantum Computing

(Forbes) Gil Press, a senior contributor to Forbes, has drawn up a short history of the evolution of quantum computing. IQT-News has summarized the list here, but readers may want to click through to Forbes and read the entire list of 27 significant developments and background comments:

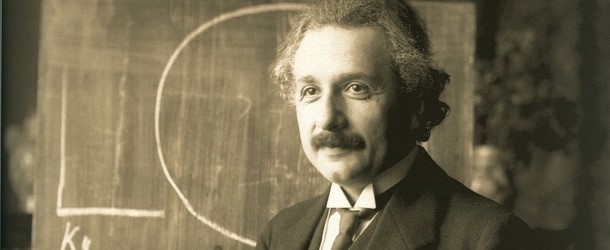

1905 Albert Einstein explains the photoelectric effect (shining light on certain materials can release electrons from the material) and suggests that light itself consists of individual quantum particles, or photons.

1924 The term quantum mechanics is first used in a paper by Max Born.

1935 Erwin Schrödinger, discussing quantum superposition with Albert Einstein and critiquing the Copenhagen interpretation of quantum mechanics, develops a thought experiment in which a cat (forever known as Schrödinger’s cat) is simultaneously dead and alive; Schrödinger also coins the term “quantum entanglement”.

1980 Paul Benioff of the Argonne National Laboratory publishes a paper describing a quantum mechanical model of a Turing machine, or classical computer; it is the first to demonstrate the possibility of quantum computing.

1985 David Deutsch of the University of Oxford formulates a description for a quantum Turing machine.

1994 The National Institute of Standards and Technology organizes the first US government-sponsored conference on quantum computing.

2002 The first version of the Quantum Computation Roadmap, a living document involving key quantum computing researchers, is published.

2011 The first commercially available quantum computer is offered by D-Wave Systems.

2012 1QB Information Technologies (1QBit), the first dedicated quantum computing software company, is founded.

2018 The National Quantum Initiative Act is signed into law by President Donald Trump, establishing the goals and priorities for a 10-year plan to accelerate the development of quantum information science and technology applications in the United States.

2019 Google claims to have reached quantum supremacy by performing a series of operations in 200 seconds that would take a supercomputer about 10,000 years to complete.