Latest quantum simulation targets baryon particles

Behold the baryon, a quantum particle built from quarks, an ingredient in almost all matter we know of, and a likely product of the Big Bang that created our universe. To learn much about baryons, physicists usually need to smash them in a particle accelerator like the Large Hadron Collider in Switzerland.

Until now. A team of researchers led by a faculty member of the University of Waterloo Institute for Quantum Computing (IQC) in Canada recently performed the first-ever simulation of baryons on a quantum computer.

“This is an important step forward – it is the first simulation of baryons on a quantum computer ever,” Christine Muschik, an IQC faculty member, said. “Instead of smashing particles in an accelerator, a quantum computer may one day allow us to simulate these interactions that we use to study the origins of the universe and so much more.”

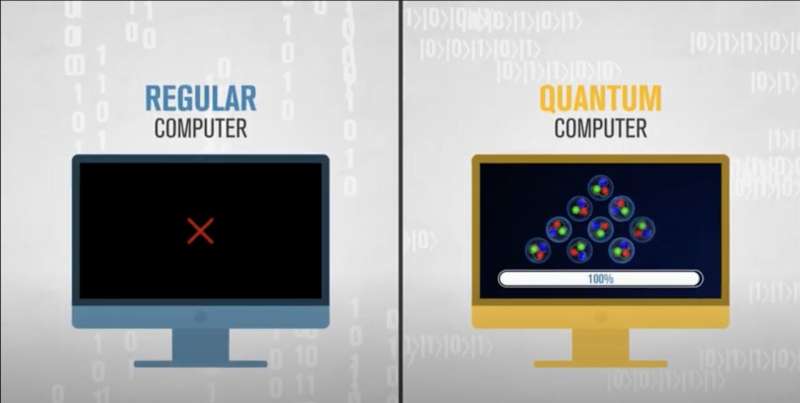

The simulation is also a big step for quantum computing, showing the kind of significant simulations quantum computers may be capable of in the future. They are expected eventually to tackle problems that the best classical supercomputers can’t solve. As this simulation may suggest, they could also help bypass the need for massive atom smashers, which have drawn increasing criticism over the expense and waste involved in building them and the relatively few major discoveries they have returned on that investment.

Simulations in general are key to advancing the quantum sector. In recent days, we’ve been hearing about simulations in which classical computers are used to simulate quantum computers. Such projects are becoming more frequent, and they are valuable in their own right at a time when few quantum computers are available. IonQ just last Friday featured a blog post about the value of classical quantum simulators, and Nvidia last week announced the largest ever simulation of a quantum algorithm.

Here’s more detail about the achievement of Muschik’s team from a University of Waterloo press release:

Muschik leads the Quantum Interactions Group, which studies the quantum simulation of lattice gauge theories. These theories are descriptions of the physics of reality, including the Standard Model of particle physics. The more inclusive a gauge theory is of fields, forces, particles, spatial dimensions and other parameters, the more complex it is—and the more difficult it is for a classical supercomputer to model.

Non-Abelian gauge theories are particularly interesting candidates for simulations because they are responsible for the stability of matter as we know it. Classical computers can simulate the non-Abelian matter described in these theories, but there are important situations—such as matter with high densities—that are inaccessible for regular computers. And while the ability to describe and simulate non-Abelian matter is fundamental for being able to describe our universe, none has ever been simulated on a quantum computer.

Working with Randy Lewis from York University, Muschik’s team at IQC developed a resource-efficient quantum algorithm that allowed them to simulate a system within a simple non-Abelian gauge theory on IBM’s cloud quantum computer paired with a classical computer.
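
The pairing of a quantum processor with a classical computer described above is typical of variational methods, though the release does not spell out the team's algorithm. As a rough illustration only, the Python sketch below runs that kind of loop on a toy one-qubit Hamiltonian, with NumPy standing in for the quantum hardware. The Hamiltonian, the single-angle trial state, and the function names are all assumptions made for this example, not the researchers' model or code.

```python
import numpy as np

# Toy one-qubit Hamiltonian (an illustrative assumption, not a gauge theory).
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
H = Z + 0.5 * X


def trial_state(theta):
    """State obtained by rotating |0> about the y-axis by angle theta."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])


def energy(theta):
    """Expectation value <psi|H|psi>.

    On real hardware this number would be estimated from repeated
    measurements; here it is computed exactly with linear algebra.
    """
    psi = trial_state(theta)
    return float(psi @ H @ psi)


def minimize_energy(theta=0.1, step=0.2, iterations=300):
    """Classical outer loop: gradient descent on the trial-state angle.

    The gradient comes from the parameter-shift rule, which needs only
    two extra energy evaluations per step.
    """
    for _ in range(iterations):
        grad = 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))
        theta -= step * grad
    return theta, energy(theta)


theta_opt, e_min = minimize_energy()
exact = np.linalg.eigvalsh(H)[0]  # exact ground-state energy for comparison
print(f"variational energy: {e_min:.6f}, exact ground energy: {exact:.6f}")
```

In a real experiment the `energy` function is the part that would run on the quantum device, while the loop that updates `theta` stays on the classical machine. A gauge-theory simulation would need many more qubits and parameters than this toy case.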

With this landmark step, the researchers are blazing a trail towards the quantum simulation of gauge theories far beyond the capabilities and resources of even the most powerful supercomputers in the world.

As scientists develop more powerful quantum computers and quantum algorithms, they will be able to simulate the physics of these more complex non-Abelian gauge theories and study fascinating phenomena beyond the reach of our best supercomputers.