Machine Learning at the Quantum Lab

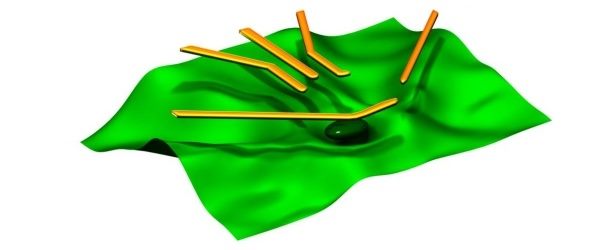

(EurekAlert) For several years, the spin of an individual electron in a quantum dot has been regarded as an ideal candidate for the smallest unit of information in a quantum computer, otherwise known as a qubit.

Now, scientists from the Universities of Oxford, Basel, and Lancaster have developed an algorithm that can help to automate the characterization of these quantum dots. Their machine-learning approach reduces both the measurement time and the number of measurements by a factor of approximately four compared with conventional data acquisition.

“For the first time, we’ve applied machine learning to perform efficient measurements in gallium arsenide quantum dots, thereby allowing for the characterization of large arrays of quantum devices,” says Dr. Natalia Ares from the University of Oxford.

“The next step at our laboratory is now to apply the software to semiconductor quantum dots made of other materials that are better suited to the development of a quantum computer,” adds Professor Dr. Dominik Zumbühl from the Department of Physics and the Swiss Nanoscience Institute at the University of Basel. “With this work, we’ve made a key contribution that will pave the way for large-scale qubit architectures.”